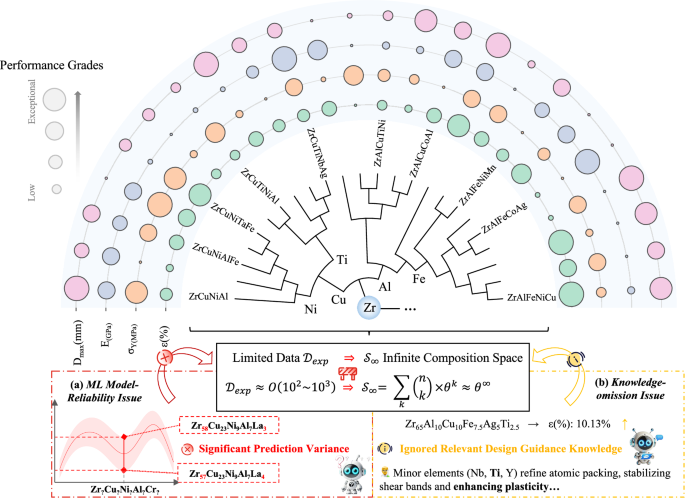

AIMATDESIGN: knowledge-augmented reinforcement learning for inverse materials design under data scarcity

Framework overview

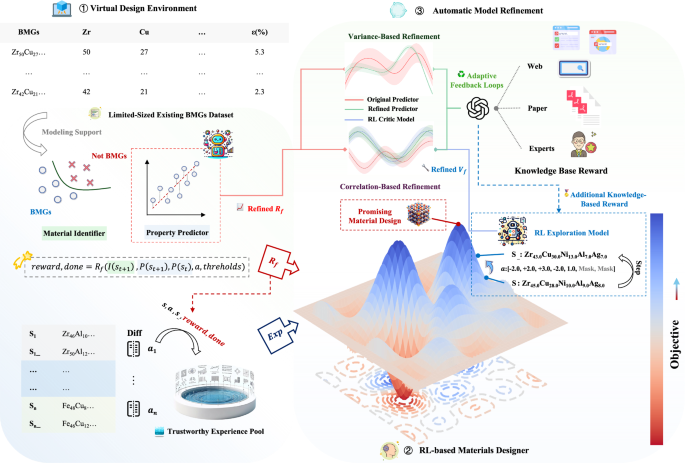

As illustrated in Fig. 2, our proposed framework AIMATDESIGN consists of three key components (full implementation details are provided in Section “Methods”):

1. Virtual design environment (Section “RL-based material design”). We build a virtual design environment from size-limited BMG datasets \({{\mathcal{D}}}_{exp}\), in which machine learning models for classification and regression serve as predictive guides. Reward functions are defined from performance thresholds on the target properties. To improve data efficiency, a difference-based strategy extracts a large set of trustworthy experience samples from the original data, forming the foundation for RL training.

2. RL-based materials designer (Section “RL-based material design”). In this environment, the RL agent explores the material composition space (\({{\mathcal{S}}}_{\infty }\)) by performing additive or subtractive operations on material elements. The agent is trained iteratively using reward signals provided by both the virtual environment and LLMs.

3. AMR (Section “Automatic Model Refinement via Adaptive Feedback Loops”). To address potential reliability issues of the ML model, or inconsistencies between the ML model’s guidance and the RL agent’s actions during training, LLMs (leveraging expert knowledge drawn from literature, online sources, or domain expertise) are employed to dynamically refine the ML model and correct the RL agent’s exploration path. This ensures the robustness and credibility of the design process.

① Virtual Design Environment, where ML classification and regression models plus a difference-based experience pool define property-centric reward functions; ② RL-based Materials Designer, in which the agent performs additive/subtractive element manipulations across the infinite composition space under combined rewards from the simulator and LLM evaluations; ③ AMR, where LLM-derived expert knowledge iteratively corrects ML model guidance and steers RL exploration to ensure robustness.

Details of each component’s implementation will be elaborated in Section “Methods”. The complete training procedure is summarized in Algorithm 1.
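The additive and subtractive operations performed by the RL-based designer can be pictured with a small sketch. The action encoding below is an assumption made for illustration only: the function `apply_action`, its parameters, and the choice to balance every change against the base element (so that the total stays at 100 at.%) are hypothetical and are not taken from the Methods section.

```python
from typing import Dict, Optional

Composition = Dict[str, float]  # element symbol -> atomic percent


def apply_action(comp: Composition, element: str, delta: float,
                 base: str) -> Optional[Composition]:
    """Add `delta` at.% to `element` (subtract when negative), taken from `base`.

    Returns the new composition, or None when the move would push any
    content below zero. Balancing against the base element is an
    assumption of this sketch; it keeps the total at 100 at.%.
    """
    new = dict(comp)
    new[element] = new.get(element, 0.0) + delta
    new[base] = new.get(base, 0.0) - delta
    if new[element] < 0 or new[base] < 0:
        return None
    # Drop elements whose content has reached zero.
    return {el: pct for el, pct in new.items() if pct > 0}


start = {"Zr": 65.0, "Cu": 15.0, "Al": 10.0, "Ni": 10.0}
added = apply_action(start, "Fe", 2.0, base="Zr")     # additive step
removed = apply_action(added, "Fe", -2.0, base="Zr")  # subtractive step
```

In this sketch the additive step turns the starting alloy into Zr63Cu15Al10Ni10Fe2, and the subtractive step restores the starting composition.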

Experimental dataset

As shown in Table 1, the amorphous alloys dataset comprises two subsets: regression and classification. Material composition features are based on 52 alloy elements, with each sample containing 3–9 valid elements (atomic percentages summing to 100%), resulting in a sparse distribution in the high-dimensional feature space. This poses challenges for both feature learning in machine learning models and exploration strategies in reinforcement learning.
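The composition constraints described above (52 candidate elements, 3 to 9 valid elements per sample, atomic percentages summing to 100%) can be written as a simple validity check. This is a minimal sketch: the function names and the tolerance `tol` are assumptions, and the dictionary representation is only one possible encoding of the 52-dimensional feature vector.

```python
from typing import Dict

N_ELEMENTS = 52                 # size of the element vocabulary stated in the text
MIN_ACTIVE, MAX_ACTIVE = 3, 9   # valid elements per sample stated in the text


def is_valid_composition(comp: Dict[str, float], tol: float = 1e-6) -> bool:
    """True if comp (element -> at.%) has 3-9 positive entries summing to 100."""
    if any(pct < 0 for pct in comp.values()):
        return False
    active = [pct for pct in comp.values() if pct > 0]
    return (MIN_ACTIVE <= len(active) <= MAX_ACTIVE
            and abs(sum(active) - 100.0) <= tol)


def sparsity(comp: Dict[str, float]) -> float:
    """Fraction of the 52 feature slots that are zero for this sample."""
    active = sum(1 for pct in comp.values() if pct > 0)
    return 1.0 - active / N_ELEMENTS


alloy = {"Zr": 63.0, "Cu": 15.0, "Al": 10.0, "Ni": 10.0, "Fe": 2.0}
ok = is_valid_composition(alloy)
zero_fraction = sparsity(alloy)  # 47 of 52 slots are empty for a 5-element alloy
```

Even a nine-element alloy leaves 43 of the 52 slots at zero, which is the sparsity the paragraph above refers to.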

The regression dataset includes three performance categories: (1) Geometric properties: maximum diameter (\({D}_{\max }\)); (2) Thermal properties: glass transition temperature (Tg), liquidus temperature (Tl), and crystallization temperature (Tx); (3) Mechanical properties: yield strength (σy), Young’s modulus (E), and elongation (ε). The sample size for geometric and thermal parameters is on the order of \({10}^{3}\), while for mechanical properties it is on the order of \({10}^{2}\). The dataset includes BMGs and other alloys to improve model generalization.

The classification dataset uses a three-class framework, with ribbon-like metallic glasses (RMG, 3675 samples), crystalline alloys (CRA, 1756 samples), and bulk metallic glasses (BMG, 1433 samples). The BMG class represents 21% of the total, creating an imbalanced distribution. The classification model must handle this imbalance by using probabilistic outputs to quantify the likelihood of a composition being BMG, which aids decision-making in reinforcement learning.

This classification and regression dataset provides essential support for the reinforcement learning environment: classification outputs serve as feasibility constraints, and regression predictions inform the multi-objective reward function, ensuring that generated materials maintain BMG attributes while optimizing overall performance.

Guidance model development

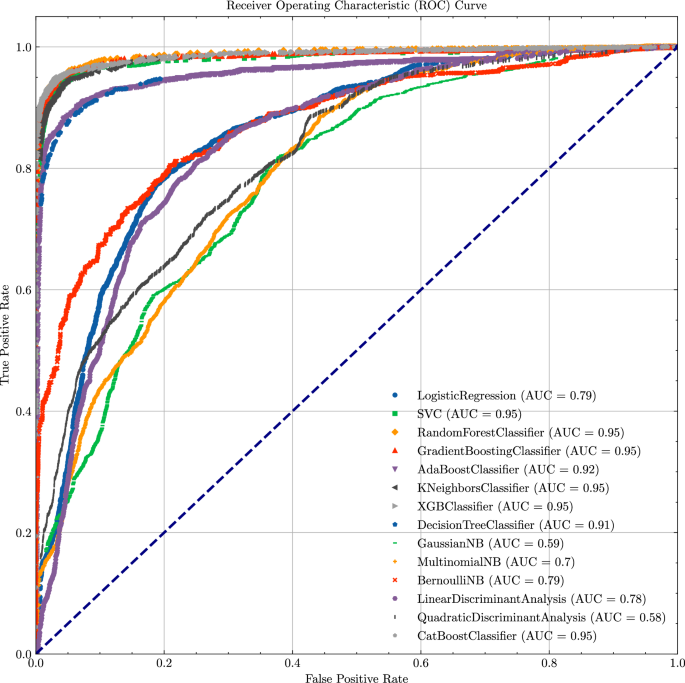

For the material classification task, we construct a probabilistic output classification model \({f}_{c}:{{\mathbb{R}}}^{52}\to [0,1]\), whose output is mapped to the reinforcement learning reward signal rc ∈ [− 0.5, 0.5] through a linear transformation. To address the 21% class imbalance, we use the SMOTE over-sampling technique to augment BMG samples.
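As a small worked example of the two numbers in this paragraph, the sketch below computes the BMG share from the class counts given in Section “Experimental dataset” and maps a predicted BMG probability onto the reward range. The text only states that the mapping is linear; the specific form `p - 0.5` is an assumption (it is the increasing affine map that sends 0 to −0.5 and 1 to 0.5), and the function name is hypothetical.

```python
# Class counts as reported for the classification dataset.
counts = {"RMG": 3675, "CRA": 1756, "BMG": 1433}
bmg_share = counts["BMG"] / sum(counts.values())  # about 0.21


def classification_reward(p_bmg: float) -> float:
    """Map a BMG probability in [0, 1] linearly onto [-0.5, 0.5].

    Assumed form: r_c = p - 0.5, so a coin-flip prediction gives zero reward.
    """
    if not 0.0 <= p_bmg <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    return p_bmg - 0.5
```

Under this assumed mapping, compositions the classifier considers unlikely to be BMG receive a negative reward, while confident BMG predictions approach 0.5.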

We benchmarked representative model families using five-fold cross-validation and highlight, for each metric, the best-performing method. The portfolio covers linear models (LR [32], LDA [33]); kernel methods (SVC [34]); tree-based learners (DT [35], RF [36]); boosting approaches (GBM [37], XGBoost [38], CatBoost [39], AdaBoost [40]); distance-based methods (KNN [41]); probabilistic classifiers (GNB [42], MNB [43], BNB [44]); and discriminant analysis (QDA [45]).

The 5-fold cross-validation performance comparison of classification models is shown in Fig. 3 (detailed metrics in Supplementary Information Section A.1, Supplementary Table S1). Both CatBoost and RF performed best overall. However, CatBoost particularly excelled in the BMG classification task with fewer samples, achieving higher Recall scores, which indicates its ability to identify more BMG samples and effectively avoid missing potential high-value targets during RL exploration. Therefore, we selected CatBoost as the guiding model for the BMG classification task in the virtual environment. Additionally, CatBoost achieved an AUC of 0.95, demonstrating its strong ability to distinguish between positive and negative samples, providing stable and reliable feedback for RL.
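Because the model choice above rests on Recall for the minority BMG class, it may help to see how per-class recall is computed. The sketch below is generic and uses made-up labels purely for illustration; it does not reproduce any result from Fig. 3 or Supplementary Table S1.

```python
from collections import Counter
from typing import Dict, Sequence


def per_class_recall(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, float]:
    """Recall per class: correctly predicted samples / true samples of that class."""
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {cls: hits[cls] / totals[cls] for cls in totals}


# Illustrative labels only (not data from the paper).
y_true = ["BMG", "BMG", "RMG", "CRA", "BMG", "RMG"]
y_pred = ["BMG", "RMG", "RMG", "CRA", "BMG", "CRA"]
recall = per_class_recall(y_true, y_pred)
```

A low BMG recall would mean that true BMG compositions are being labelled as another class, which in the RL setting translates into promising candidates being penalized.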

ROC curve and AUC for classification models.

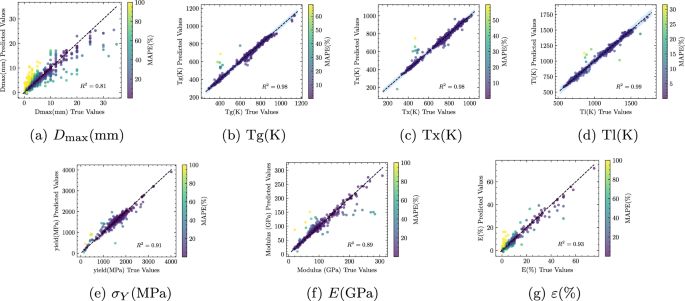

For the regression task, we construct a multi-task regression model \({f}_{r}:{{\mathbb{R}}}^{52}\to {{\mathbb{R}}}^{7}\) for quantifying rewards in the range [0.5, 1]. To ensure prediction accuracy, we evaluated linear regularized models (Ridge [46], Lasso [47], ElasticNet [48]), kernel methods (SVR [49]), tree ensembles (RF Regressor [36]), boosting (AdaBoost Regressor [50], GBM [37], XGBoost [38]), distance-based methods (KNN Regressor [41]), and a randomized fast learner (edRVFL [51]). We used 5-fold cross-validation and report the best-performing model.

Figure 4 shows the performance of the edRVFL model, which performed best. Details for the other models and metrics are in Supplementary Information Section A.2, Supplementary Table S2. Across the geometric, thermal, and mechanical properties, edRVFL outperformed all other models on the key metrics (\({R}^{2}\), RMSE, and MAPE). Notably, edRVFL improved \({R}^{2}\) by over 0.27 for predicting ε (%) and by over 0.3 for σY (MPa). It also delivered stable, high-precision predictions for the remaining properties. Therefore, we selected edRVFL as the guiding model for performance prediction in the virtual environment.

a–g Show the predicted values versus the experimental measurements for each property. a Maximum diameter Dmax. b Glass transition temperature Tg. c Crystallization temperature Tx. d Liquidus temperature Tl. e Yield strength σy (MPa). f Young’s modulus E (GPa). g Elongation ε (%). The diagonal dashed line indicates perfect prediction. Each point represents one material sample.

The regression model provides quantifiable feedback for the reward function in the virtual environment. The high \({R}^{2}\) of edRVFL supports accurate material property predictions and reduces the policy bias caused by prediction errors, which in turn improves the RL model’s exploration efficiency and reliability in new material design.

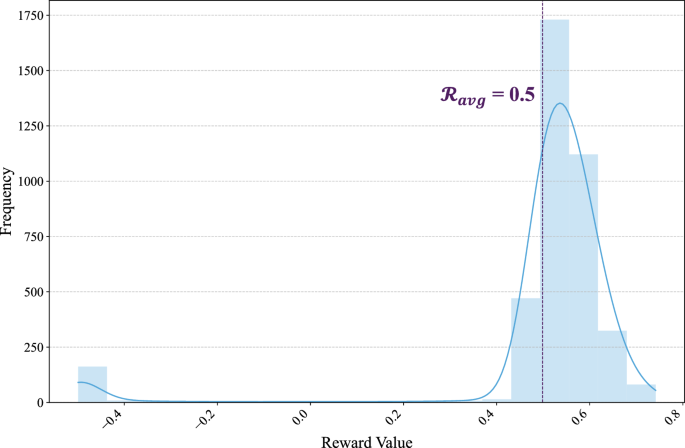

As shown in Fig. 5, the reward values in the experience pool exhibit a unimodal distribution, with over 95% of the samples concentrated in the range [0.4, 0.6], and a mean of 0.5. This distribution indicates that most experience samples provide positive feedback for policy optimization. At the same time, experiences with lower rewards (e.g., in the range [−0.5, 0]) correspond to states where s2’s alloy is non-BMG, representing infeasible solutions discovered during exploration, which provide negative feedback constraints for strategy optimization.

Distribution of reward values in the TEP.

As detailed in Methods (Section “RL-based material design”), we apply a reward-aware replacement strategy that swaps part of each training batch with higher-reward samples from the TEP. This simple adjustment accelerates exploration and leads to faster, more stable convergence in subsequent epochs.
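The reward-aware replacement idea can be sketched as follows. The details are assumptions made for illustration: the replacement fraction `replace_frac`, the choice to evict the lowest-reward transitions of the batch, and the tuple layout of a transition are all hypothetical, and the exact rule used in the Methods section may differ.

```python
import random
from typing import List, Optional, Tuple

# (state, action, reward, next_state); the layout is an assumption of this sketch.
Transition = Tuple[str, int, float, str]


def reward_aware_batch(batch: List[Transition], tep: List[Transition],
                       replace_frac: float = 0.25,
                       rng: Optional[random.Random] = None) -> List[Transition]:
    """Swap the lowest-reward part of `batch` for the highest-reward TEP samples."""
    rng = rng or random.Random(0)
    n_replace = min(int(len(batch) * replace_frac), len(tep))
    if n_replace == 0:
        return list(batch)
    # Highest-reward transitions from the trustworthy experience pool.
    injected = sorted(tep, key=lambda t: t[2], reverse=True)[:n_replace]
    # Keep the best-rewarded part of the original batch.
    kept = sorted(batch, key=lambda t: t[2], reverse=True)[:len(batch) - n_replace]
    mixed = kept + injected
    rng.shuffle(mixed)  # avoid a fixed ordering inside the batch
    return mixed


batch = [("s", 0, r, "s2") for r in (0.1, 0.2, 0.3, 0.4)]
tep = [("s", 1, r, "s2") for r in (0.9, 0.8, 0.05)]
mixed = reward_aware_batch(batch, tep, replace_frac=0.5)
```

The batch size is unchanged, so the training loop does not need to know whether replacement took place.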

RL design results

Experimental setup

We first analysed the dataset from Section “Experimental dataset” and identified 35 exploration bases, corresponding to the most prevalent elements in the compositions. Based on the compositional ranges provided by the dataset, we set component limits for each base and randomly generated an initial base within this range as the starting state S0 for the RL process.

During RL training, each epoch consists of up to 128 steps (terminated early if the stopping condition is met), and training runs for 1000 epochs. The RL method therefore evaluates at most 128 × 1000 = 128,000 candidate compositions. For fairness, the number of ML predictions available to the traditional optimization algorithms during their search is also set to 128 × 1000.
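The evaluation budget follows directly from the epoch structure. The sketch below shows the arithmetic and a generic epoch loop with early termination; `run_epoch` and its `step_fn` callback are hypothetical names used only for illustration.

```python
from typing import Callable

MAX_STEPS_PER_EPOCH = 128
N_EPOCHS = 1000

# Upper bound on ML predictions, shared by the RL method and the baselines.
prediction_budget = MAX_STEPS_PER_EPOCH * N_EPOCHS  # 128000


def run_epoch(step_fn: Callable[[int], bool],
              max_steps: int = MAX_STEPS_PER_EPOCH) -> int:
    """Run one epoch and return the number of steps actually used.

    `step_fn(i)` performs step i and returns True once the stopping
    condition is met, which ends the epoch early.
    """
    for i in range(max_steps):
        if step_fn(i):
            return i + 1
    return max_steps
```

Because epochs can stop early, 128,000 is an upper bound on the number of compositions the agent evaluates rather than an exact count.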

For the AMR and KBR components, the LLM used was GPT-4o-2025-03-26 [52].

Additional implementation details, including the modelling procedures, are provided in Supplementary Information Section B; the KBR and AMR prompt templates are listed in Supplementary Information Section C.

Baselines

To validate the effectiveness of our proposed approach, we compared it against a range of representative methods that have been applied in recent materials design studies. The baseline methods are categorized into three groups:

1. Traditional inverse design algorithms: Classical search and evolutionary methods such as grid search and NSGA-II [18–21] have been widely employed in alloy and compound design. Grid search provides exhaustive coverage but suffers from poor scalability, while NSGA-II offers efficient multi-objective optimization, though it can be sensitive to population initialization and parameter settings.

2. Active learning (AL): AL frameworks [22–24] iteratively select the most informative candidates to accelerate experimental discovery. They are advantageous in reducing labelling cost, but their performance is often limited in small-sample scenarios due to model bias and uncertainty estimation errors.

3. Reinforcement learning (RL): RL has recently been introduced into inverse materials design [29], enabling agents to actively explore compositional spaces beyond greedy exploitation. We considered both value-based methods such as DQN [53], which leverages Q-value learning but may face stability issues, and policy-based methods including DDPG [54], TD3 [55], SAC [56], and PPO [57], which generally offer better exploration efficiency and robustness in continuous design spaces.

Evaluation metrics

To comprehensively evaluate the performance of each method in materials design, the following key metrics were used in the experiments:

- SRlegal: The step-level success rate of generating samples that satisfy material design legality constraints. (Since traditional design methods have predefined component ranges, SRlegal is not reported for these methods.)

- SRcls: The step-level classification success rate of generating samples belonging to the target material class (e.g., BMG).

- SR80%: The step-level success rate of generating samples that meet the top 80% of key performance indicators in the original dataset, including maximum diameter (\({D}_{\max }\)), glass transition temperature ratio (Tg/Tl), yield strength (σY), Young’s modulus (E), and elongation (ε (%)).

- SRdone: The epoch-level success rate of generating samples that simultaneously meet all design objectives by the end of each training epoch.
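The distinction between step-level and epoch-level rates in the list above can be made concrete with a short sketch. The log structure (`step_log`, `epoch_done`) and the numbers in it are hypothetical; only the definitions of the rates follow the text.

```python
from typing import Iterable


def success_rate(flags: Iterable[bool]) -> float:
    """Percentage of True entries; 0.0 for an empty log."""
    flags = list(flags)
    return 100.0 * sum(flags) / len(flags) if flags else 0.0


# One entry per RL step (illustrative values only).
step_log = [
    {"legal": True, "bmg": True},
    {"legal": True, "bmg": False},
    {"legal": False, "bmg": False},
    {"legal": True, "bmg": True},
]
# One entry per epoch: were all design objectives met by the end of the epoch?
epoch_done = [True, False]

sr_legal = success_rate(s["legal"] for s in step_log)  # step-level
sr_cls = success_rate(s["bmg"] for s in step_log)      # step-level
sr_done = success_rate(epoch_done)                     # epoch-level
```

SRlegal, SRcls, and SR80% are therefore averaged over every generated sample, whereas SRdone counts each epoch once.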

The experimental results, shown in Table 2, demonstrate that our model exhibits significant advantages in multi-objective inverse materials design. It achieves near-theoretical limits in both legality constraint success rate (SRlegal = 99.65%) and material classification success rate (SRcls = 99.12%), validating its precise control over complex compositional constraints.

Compared with Grid Search/NSGA-II, AL generally outperforms traditional search but still falls short of our framework across metrics. Under small-sample conditions, ML-only design strategies are prone to model bias and unstable generalization; by contrast, our framework couples RL-driven strategic exploration with LLM-guided corrections, mitigating these limitations.

For the key performance indicator (SR80%), our framework shows improvements of over 6 percentage points compared to the best-performing RL baseline in yield strength (σY(MPa) = 46.93%) and elongation (ε(%) = 49.38%). Furthermore, the overall success rate (SRdone = 50.32%) is 3.4 times higher than that of the traditional evolutionary algorithm NSGA-II, highlighting the efficient exploration capabilities of reinforcement learning in continuous high-dimensional spaces.

It is also noteworthy that, under the same number of ML predictions (128,000), traditional methods exhibit a very low proportion of valid samples (below 15%) due to their reliance on random or exhaustive search. In contrast, our model leverages a dynamic policy network for goal-directed compositional generation, providing a substantially more efficient pathway for high-cost material experiments.

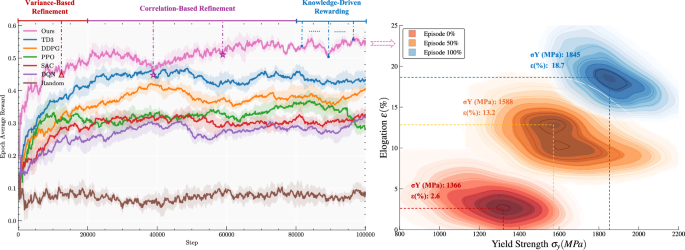

In the left half of Fig. 6, the model demonstrates a significant improvement in convergence speed through the TEP, with an average reward increase of 0.1 in the first 5000 training steps compared to the TD3 algorithm. During training, two optimization mechanisms are triggered sequentially: Variance-Based Refinement at episode 201, and Correlation-Based Refinement at episodes 398 and 503. The experimental results show that without model optimization, the reward metric declines due to the performance limitations of the initial machine learning guiding model (e.g., a decrease of 0.05 at episode 398). However, after optimization, the model performance improves significantly. In the later training stages (last 20% of steps), the introduction of the KBR facilitates secondary optimization of the converged model, leading to a 0.05 increase in the reward curve.

Left – Reward progression of RL-based models. Right – performance evolution of the AIMATDESIGN model.

The right half of Fig. 6 shows that, as training of the AIMATDESIGN model progresses, the distribution of E (GPa) and σY (MPa) for the generated BMGs shifts steadily towards the upper-right region of the plot. The average elastic modulus (E) increases by 18.7%, a clear trend of performance improvement.

AMR results

Experimental setup

The refinement strategies were supported by GPT-4o-2025-03-26 [52], which interacted with the model using the current predictions and a material knowledge base to select 1–3 features from the candidate pool [58]. If optimization failed to meet expectations, up to three iterations were performed before abandoning the attempt.
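The bounded retry behaviour described here (1–3 features per proposal, at most three attempts) can be sketched as a small control loop. All callables below are hypothetical placeholders: `propose` stands in for the LLM interaction, `evaluate` for retraining and scoring the ML model, and `is_good_enough` for the acceptance criterion, none of which are specified in this section.

```python
from typing import Callable, List, Optional, Tuple


def refine_with_retries(
    propose: Callable[[int], List[str]],
    evaluate: Callable[[List[str]], float],
    is_good_enough: Callable[[float], bool],
    max_iters: int = 3,
) -> Optional[Tuple[List[str], float, int]]:
    """Try up to `max_iters` feature proposals; return the first accepted one."""
    for attempt in range(1, max_iters + 1):
        features = propose(attempt)
        if not 1 <= len(features) <= 3:
            continue  # proposal outside the 1-3 feature budget
        score = evaluate(features)
        if is_good_enough(score):
            return features, score, attempt
    return None  # abandon the refinement attempt


# Toy stand-ins for the LLM and the retraining step (illustrative only).
proposals = {1: ["a", "b", "c", "d"], 2: ["x"], 3: ["y", "z"]}
result = refine_with_retries(
    propose=lambda k: proposals[k],
    evaluate=lambda feats: float(len(feats)),
    is_good_enough=lambda score: score >= 2.0,
)
```

Returning `None` after the last attempt mirrors the text: the refinement is abandoned and training continues with the existing guidance model.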

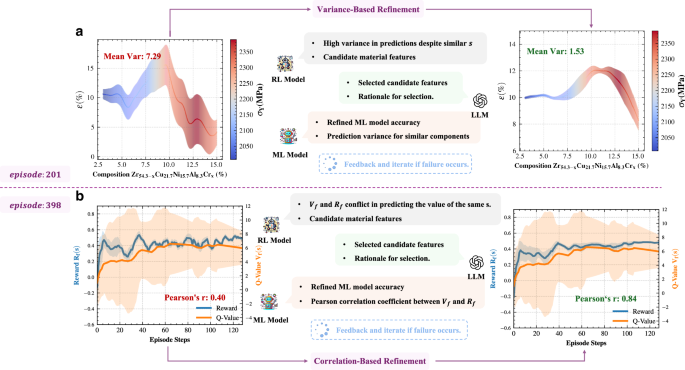

Figure 7 shows the experimental results obtained using the Variance-Based and Correlation-Based refinement strategies in AIMATDESIGN training.

- Variance-Based Refinement: In the 201st iteration, the model’s prediction of elongation (ε) showed high variance (mean squared error of 7.29). Through iterative interaction with LLMs, a set of material features was selected from the candidate feature pool and the ML model was retrained, reducing the variance in this region to 1.53 and thus limiting the uncertainty caused by high variance.

- Correlation-Based Refinement: In the 398th iteration, the correlation between the reward curve provided by the ML model and the Q-value curve predicted by the RL model was low (Pearson correlation coefficient of only 0.40). After interactive analysis by the LLMs and selection of applicable material features, the correlation coefficient increased to 0.84. This not only ensured consistency between the two models but also significantly reduced the fluctuations in the reward and Q-value, thereby enhancing overall decision stability.

a Variance-Based Refinement, reduces local prediction variance for elongation (ε); b Correlation-Based Refinement, enhances consistency between the ML model’s reward and the RL model’s Q-value.

Overall, Variance-Based Refinement targets regions with high local variance, optimizing prediction accuracy at a fine-grained level, while Correlation-Based Refinement aims to improve the correlation between global performance metrics to enhance decision consistency between the RL and ML models. Together, these strategies complement each other, providing strong support for efficient exploration and reliable decision-making in RL-based new materials design.
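The consistency check behind Correlation-Based Refinement relies on the Pearson correlation between the ML reward curve and the RL Q-value curve. A minimal sketch of such a check is given below; the trigger threshold of 0.5 and the function names are assumptions, since this section reports only the observed coefficients (0.40 before and 0.84 after refinement).

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if norm_x == 0 or norm_y == 0:
        return 0.0
    return cov / (norm_x * norm_y)


def needs_correlation_refinement(rewards: Sequence[float],
                                 q_values: Sequence[float],
                                 threshold: float = 0.5) -> bool:
    """Flag a refinement when reward and Q-value curves disagree.

    The threshold is a hypothetical value chosen for this sketch.
    """
    return pearson(rewards, q_values) < threshold
```

With this assumed threshold, a coefficient of 0.40 would trigger refinement and a coefficient of 0.84 would not.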

Ablation study

Table 3 compares the performance of the full model with models where certain components (TEP, AMR, and KBR) are removed. The results show that removing any component leads to a decline in overall performance, while the full model performs best across several key metrics, confirming the positive contribution of each component to the overall framework.

Specifically, the “w/o AMR” model shows a 4.5% decrease in SRdone, indicating that the AMR process provides an effective feedback mechanism for both the ML and RL models, significantly impacting the final material design success rate.

Additionally, because the introduction of Correlation-Based Refinement occurs at a fixed point in time, the convergence speed of RL is crucial for subsequent model refinement and design capability. Removing the TEP (“w/o TEP”) slows down early-stage RL convergence, making it more difficult to fully leverage later refinement, resulting in a lower success rate compared to the full model. On the other hand, “w/o KBR” performs well on local prediction tasks but lags behind the full model in overall success rate (SRdone).

These results further confirm that the performance improvements observed in AIMATDESIGN are not solely attributable to the LLM modules. Instead, they arise from the joint effect of the RL framework and LLM-guided refinements. Specifically, the RL backbone with the TEP provides the primary exploration capability and stable convergence, while AMR enhances the reliability of ML guidance and KBR adds a small late-stage bias toward domain-consistent solutions. Table 3 shows that removing any component reduces performance, underscoring that the best outcomes emerge only when these modules are combined. In summary, the full model achieves more balanced and superior performance across all metrics, which demonstrates the importance of the synergy among the three components for multi-objective optimization and reliable decision-making in RL-based new materials design.

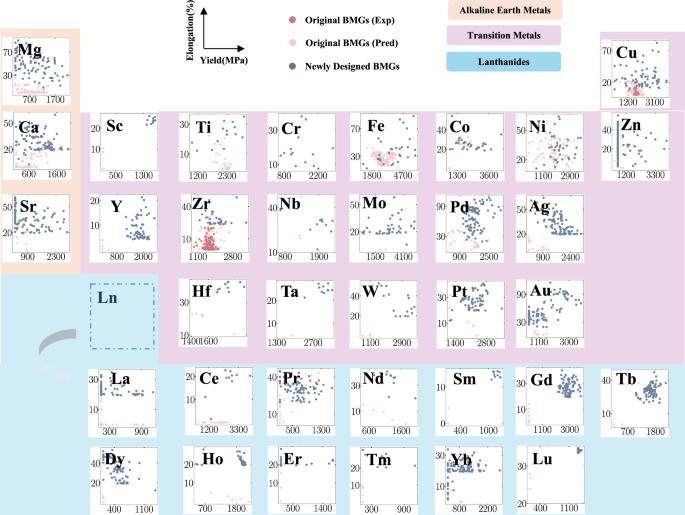

Design results

To validate the applicability of the proposed method across different base materials, we conducted 100 training epochs on each of 35 representative metal bases and recorded the SRdone for each base. The results are displayed in Fig. 8, with alkaline earth metals (orange), transition metals (purple), and lanthanide elements (blue) showing the distribution of target performance during the exploration process.

Distribution of σY(MPa) performance (x-axis) and ε(%) (y-axis) across 35 representative metal bases during the design exploration process.

The results, shown in Fig. 8, highlight substantial differences in design difficulty across elements: base elements such as Au, Zn, and Ag achieve an SRdone of 100% or close to it, whereas bases like Sm and Ta exhibit markedly lower SRdone values. This difference is partly due to the inherent chemical properties and feasible space variations of each element, and also reflects the reinforcement learning strategy’s adaptability, which is still constrained by initial conditions and design constraints.

In multiple experiments, the overall average success rate of the method was 54.8%. Notably, even for bases with lower success rates (e.g., Hf, Nb), feasible solutions were still found within a smaller range. This demonstrates that the proposed method can provide stable, high success rates for materials with “easy-to-explore” design spaces, such as noble metals and alkaline earth metals, while also possessing the ability to uncover potential feasible solutions in more challenging material bases (e.g., rare earth or transition metals). This approach balances broad search capabilities with deep exploration, offering valuable insights for future RL-based material design iterations.

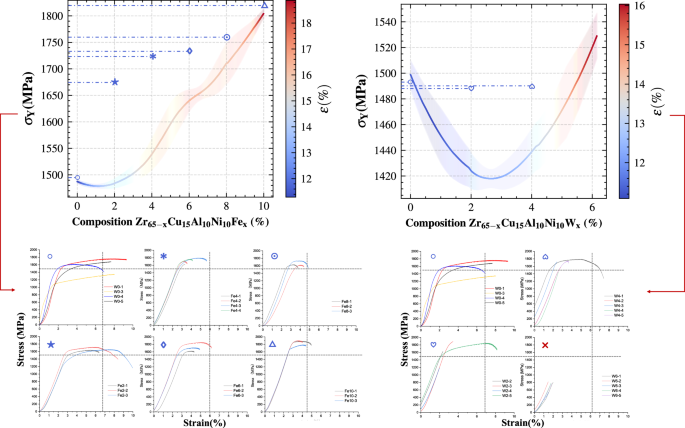

For a more rigorous assessment of AIMATDESIGN, we selected the two Zr-based BMG cluster centres obtained by k-means [59] in Section “RL design results” (Zr63Cu15Al10Ni10Fe2 and Zr63Cu15Al10Ni10W2), together with their neighbouring compositions (top panel in Fig. 9), for experimental validation (bottom panel in Fig. 9). The Zr system was chosen because it accounts for the largest share of the original database, yielding the highest model confidence.

Top: Predicted yield strength (σY) and plastic strain (ε) trends for Zr65−xCu15Al10Ni10Fex (left) and Zr65−xCu15Al10Ni10Wx (right). Bottom: Experimental stress-strain curves. Fe alloying raises σY monotonically while ε peaks at x = 2 (10.2%). In contrast, W alloying yields minor strength fluctuations at x = 2~4 but a pronounced strength drop and near-zero ductility at x = 6, implying partial crystallisation.

All specimens were produced by single-step suction casting without post-heat treatment; room-temperature compression tests were performed at a strain rate of \({10}^{-4}\,{{\rm{s}}}^{-1}\) (Table 4). The average relative error between predicted and experimental yield strength, σY, is only 4.9%, and Fig. 9 confirms that the experimental σY trend mirrors the AIMATDESIGN prediction.
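For reference, an average relative error of this kind can be computed as sketched below. Two points are assumptions: the error is normalized by the experimental value (the text does not state the denominator), and the numbers in the example are placeholders rather than the values from Table 4.

```python
from typing import Sequence


def mean_relative_error(predicted: Sequence[float],
                        measured: Sequence[float]) -> float:
    """Mean of |predicted - measured| / |measured|, in percent."""
    if len(predicted) != len(measured) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [abs(p - m) / abs(m) for p, m in zip(predicted, measured)]
    return 100.0 * sum(errors) / len(errors)


# Placeholder yield strengths in MPa (not the measured data).
example_error = mean_relative_error([1900.0, 2000.0], [2000.0, 2000.0])
```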

By contrast, the measured plastic strain ε is systematically lower than predicted owing to two factors:

(i) Most training data were taken from literature values for mechanically polished, diameter-optimised cylindrical samples, whereas the present one-step cast plates exhibit surface defects and residual stresses that were not explicitly modelled.

(ii) Process parameters are often missing from the source literature, preventing the model from capturing processing-microstructure-ductility couplings.

Even so, the Zr63Cu15Al10Ni10Fe2 sample achieved an experimental ε of 10.2%, demonstrating that AIMATDESIGN can deliver BMGs whose yield strength agrees closely with predictions while retaining appreciable ductility without further processing. This closed-loop validation underscores the engineering feasibility of the framework and establishes a paradigm for subsequent iterations on more challenging base alloys.
