Learning design-score manifold to guide diffusion models for offline optimization

In this section, we first introduce preliminaries on offline optimization, the basics of diffusion models, baseline methods and performance metrics. Subsequently, to show the motivation and advantages of ManGO, we compare the learned versus the original design-score manifold and visualize the trajectory generation. We then conduct extensive experimental validation on offline SOO and MOO using Design-Bench [6] and Off-MOO-Bench [14]. Finally, we perform systematic ablation studies to analyze the contributions of ManGO’s core components.

Preliminaries

Offline optimization [6], also referred to as offline model-based optimization, seeks to identify an optimal design x* within a design space \({\mathcal{X}}\subseteq {{\mathbb{R}}}^{d}\) without requiring online evaluations, where d denotes the design dimension. Based on the number of objective functions \({\boldsymbol{f}}(\cdot )=({f}_{1}({\boldsymbol{x}}),\ldots ,{f}_{m}({\boldsymbol{x}})):{\mathcal{X}}\to {{\mathbb{R}}}^{m}\), offline optimization can be classified into two types [14]: (i) offline SOO when m = 1, and (ii) offline MOO when m > 1.

Offline SOO aims to identify the optimal design \({{\boldsymbol{x}}}^{* }=\arg \mathop{\min }\limits_{{\boldsymbol{x}}\in {\mathcal{X}}}f({\boldsymbol{x}})\) using only a pre-collected offline dataset \({\mathcal{D}}={\{({{\boldsymbol{x}}}_{i},{y}_{i})\}}_{i = 1}^{N}\), where xi denotes a specific design (also referred to as a solution) and yi = f(xi) represents its corresponding score (or objective value). Offline MOO aims to identify a set of designs that achieve optimal trade-offs among conflicting objectives using a pre-collected dataset \({\mathcal{D}}={\left\{({{\boldsymbol{x}}}_{i},{{\boldsymbol{y}}}_{i})\right\}}_{i = 1}^{N}\), where yi denotes the vector of scores corresponding to design xi. The problem is defined as [15]: \(\,\text{Find}\,{{\boldsymbol{x}}}^{* }\in {\mathcal{X}}\,\text{such that}\,\nexists {\boldsymbol{x}}\in {\mathcal{X}}\,\text{with}\,{\boldsymbol{f}}({\boldsymbol{x}})\prec {\boldsymbol{f}}({{\boldsymbol{x}}}^{* }),\) where ≺ denotes Pareto dominance. A design \({{\boldsymbol{x}}}^{{\prime} }\) is said to Pareto dominate another design x, denoted as \({\boldsymbol{f}}({{\boldsymbol{x}}}^{{\prime} })\prec {\boldsymbol{f}}({\boldsymbol{x}})\), if \(\exists i\in \{1,\ldots ,m\},{f}_{i}({{\boldsymbol{x}}}^{{\prime} }) < {f}_{i}({\boldsymbol{x}})\) and \(\forall j\in \{1,\ldots ,m\},{f}_{j}({{\boldsymbol{x}}}^{{\prime} })\le {f}_{j}({\boldsymbol{x}}).\) Namely, \({{\boldsymbol{x}}}^{{\prime} }\) is superior to x in at least one objective while being at least as good in all others. A design x* is Pareto optimal if no other design \({\boldsymbol{x}}\in {\mathcal{X}}\) Pareto dominates x*. The set of all Pareto optimal designs is referred to as the Pareto set (PS), and the set of their scores {f(x*)∣x* ∈ PS} constitutes the Pareto front. The goal of offline MOO is to identify the PS using a pre-collected dataset, thereby achieving optimal trade-offs among conflicting objectives.

Diffusion models are a type of deep generative models that learn to reverse a gradual noising process, transforming random noise into realistic data through iterative denoising. Let xt denote the state of a data sample x0 at time t ∈ [0, T], where x0 is drawn from an unknown data distribution p0(x). Here, xt represents a noisy version of x0 at time t, and xT corresponds to a point sampled from a prior noise distribution pT(x), typically chosen as the standard normal distribution \({p}_{T}({\boldsymbol{x}})={\mathcal{N}}({\boldsymbol{0}},{\boldsymbol{I}})\).

The forward diffusion process, also known as the noise-adding process, can be modeled as a stochastic differential equation (SDE) [16]: dx = f(x, t)dt + g(t)dw, where w denotes the standard Wiener process, \({\bf{f}}:{{\mathbb{R}}}^{d}\to {{\mathbb{R}}}^{d}\) is the drift coefficient, and \(g(t):{\mathbb{R}}\to {\mathbb{R}}\) is the diffusion coefficient of xt. The denoising process is defined by the reverse-time SDE: \(d{\boldsymbol{x}}=\left[{\bf{f}}({\boldsymbol{x}},t)-g{(t)}^{2}{\nabla }_{{\boldsymbol{x}}}\log {p}_{t}({\boldsymbol{x}})\right]dt+g(t)d\tilde{{\boldsymbol{w}}},\) where dt represents an infinitesimal step backward in time, and \(d\tilde{{\boldsymbol{w}}}\) is the reverse-time Wiener process.

Baseline methods and performance metrics

We consider existing baseline methods for offline SOO based on three methodological paradigms: (i) Surrogate-based methods: optimizing with surrogate models, including BO-qEI [17, 18], CMA-ES [10], REINFORCE [19], Gradient Ascent and its variants of mean ensemble and min ensemble. (ii) Forward-modeling methods: employing advanced neural networks like generative models as surrogate models and integrating with surrogate-based methods, including COMs [20], RoMA [21], IOM [22], BDI [11], ICT [23], Tri-Mentoring [24], PGS [25], FGM [26], Match-OPT [27], and RaM [28]. (iii) Inverse-modeling methods: applying score as a condition to reverse design with generative models, including CbAS [29], MINs [30], DDOM [12], BONET [31], and GTG [32].

For offline MOO, existing approaches remain relatively under-explored compared to offline SOO. Our evaluation focuses on three representative approaches: (i) Multiple Models (MMs)-based NSGA-II: We implement NSGA-II with independent objective predictors and perform predictors’ ensemble as the surrogate model for evolutionary optimization, which outperforms end-to-end and multi-head variants [14]. (ii) Multi-objective Bayesian Optimization (MOBO): We adapt the canonical MOBO by substituting Gaussian Processes with the MM ensemble and employ an HV-based acquisition function, qNEHVI [33], which outperforms scalarization and information-theoretic alternatives [14]. (iii) Generative methods: ParetoFlow [34], a flow-model-based method utilizing adaptive weights for multiple predictors to guide flow sampling toward PF; MO-DDOM, a diffusion-model-based method where we extend DDOM through multi-score conditioning and adding MM-based design evaluation.

In offline optimization, where environment interaction is prohibited, it is essential to evaluate multiple candidate solutions; thus, standard benchmarks adopt k-shot evaluation with the 100th percentile (best candidate) as the performance metric [6, 14]. All results are normalized using task-specific references for comparison: For Design-Bench with maximization tasks (Table 1), we use standardization normalization for the Superconduct task and min-max normalization for other tasks based on the unobserved dataset’s highest score. For the Off-MOO-Bench with minimization tasks (Tables 2), we use min-max normalization with the best HV and IGD values of training datasets.

Motivation and Advantages of ManGO

Compared to conventional manifold learning, our diffusion-based approach provides unique advantages for offline optimization. Unlike conventional methods like kernel-based methods that struggle with complex nonlinear geometries [35], diffusion models excel at capturing intricate manifold structures through stochastic denoising. Crucially, diffusion models inherently support conditional generation, enabling direct generation of high-performing designs conditioned on target scores-a capability absent in standard manifold learning. Furthermore, compared to GANs or VAEs, diffusion models offer superior training stability and generation fidelity [36], which are critical for reliable optimization from offline data. This combination of strong representational capacity and built-in conditional generation makes diffusion models suited for learning the design-score manifold.

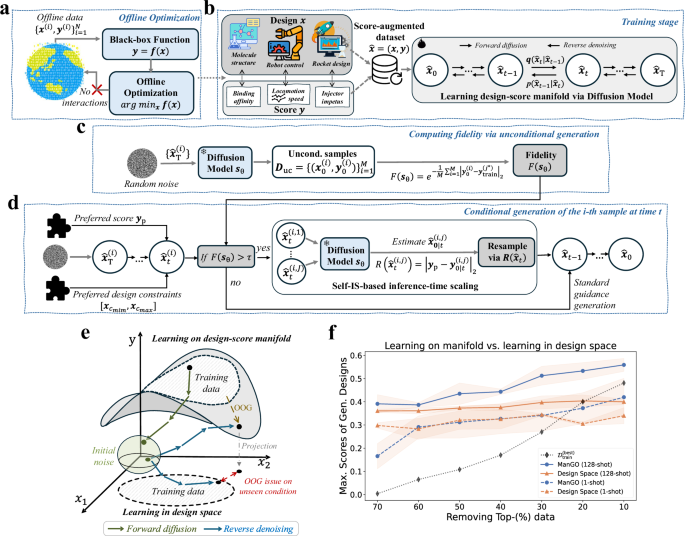

Compared to existing methods for offline optimization, our proposed ManGO framework explicitly learns the design-score manifold and leverages the underlying manifold geometry to co-generate designs and scores. Specifically, ManGO learns a bidirectional generation between designs and scores: (i) Design-to-Score Prediction: Given any design configuration, ManGO predicts its corresponding score; (ii) Score-to-Design Generation: For any preferred score, ManGO generates its corresponding design. As illustrated in Figure 1e, this bidirectional mapping provides ManGO with a robust OOG capability to extrapolate beyond training distributions. Let \({\hat{{\boldsymbol{x}}}}_{t}=({{\boldsymbol{x}}}_{t},{{\boldsymbol{y}}}_{t})\) denotes a score-augmented design vector at timestep t, where x and y represent the design and its score, respectively. The denoising update can be represented as:

$$\hat{{\boldsymbol{x}}}_{t – 1} = ({{\boldsymbol{x}}}_{t},{{\boldsymbol{y}}}_{t} )+ \underbrace{({{\Delta}} {{\boldsymbol{x}}}_{t}, {{\Delta}} {{\boldsymbol{y}}}_{t})}_{{\text{Co-update}}}=\frac{1}{2}\underbrace{({{\boldsymbol{x}}}_{t}+2{{\Delta}} {{\boldsymbol{x}}}_{t}, {{\boldsymbol{y}}}_{t})}_{{\rm{Design}}\,{\rm{update}}} + \frac{1}{2}\underbrace{({{\boldsymbol{x}}}_{t}, {{\boldsymbol{y}}}_{t}+2{{\Delta}} {{\boldsymbol{y}}}_{t})}_{{\rm{Score}}\,{\rm{update}}},$$

where the co-update term indicates the denoising update based on the learned manifold geometry, the design-update term indicates the denoising update of xt conditioned on yt, and the score-update term indicates the denoising update of yt conditioned on xt. Unlike the design-space approaches that treat yt as a fixed condition and only update xt unidirectionally, ManGO jointly updates both xt and yt based on the bidirectional mapping. The design-space methods struggle with extrapolation under unseen conditions. In contrast, ManGO leverages co-updates to dynamically capture the geometric relationship between design and score: each denoising step not only pushes xt toward the local manifold conditioned on yt (design-update) but also refines yt to align with the evolving xt (score-update). This bidirectional feedback enables progressive extrapolation and converges to the conditioned points on the manifold, achieving a robust OOG capability.

We conduct a controlled experiment to elucidate the advantage of the bidirectional mapping of ManGO on a superconductor [37] task, an 86-D materials design optimization task to maximize a critical temperature, using DDOM [12] as the design-space baseline. In terms of the 128-shot evaluation, Figure 1f shows that ManGO achieves a consistent score gain of greater than 0.1 over the design-space approach across varying levels of top-data removal (from 70% to 10%). The gain grows to nearly 0.2 when only 10% of the top data are removed. Regarding the 1-shot evaluation, both methods exhibit comparable performance under severe data removal (from 70% to 30%). However, ManGO demonstrates progressively better results as data availability increases. At 10% data removal, ManGO achieves higher scores than the design-space method with 128 shots. These results confirm that: (i) ManGO captures the bidirectional design-score relationships, enabling robustness to OOG challenges; (ii) ManGO exhibits nonlinear scaling of sample efficiency with data quality, achieving superior few-shot performance.

Manifold and trajectory generation visualization

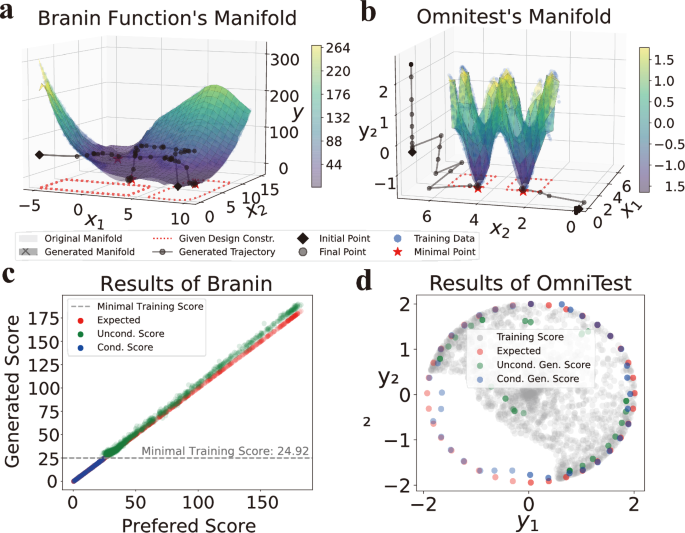

To demonstrate the bidirectional mapping capability, we visualize the performance of ManGO on two canonical minimization tasks. (i) Branin function (for SOO): A well-studied 2D function containing three global minima within x1 ∈ [ − 5, 10], x2 ∈ [0, 15] and \({y}_{\min }=0.398\), serving as an ideal testbed to capture multimodal landscapes. Specifically, \({f}_{{\rm{br}}}\left({x}_{1},{x}_{2}\right)=a{\left({x}_{2}-b{x}_{1}^{2}+c{x}_{1}-r\right)}^{2}-s(1-t)\cos {x}_{1}-s,\) where \(a=1,b=\frac{5.1}{4{\pi }^{2}},c=\frac{5}{\pi },r=6,s=10\), and \(t=\frac{1}{8\pi }\). (ii) OmniTest (for MOO): A synthetic 2D problem generating 9 disconnected Pareto-optimal points with x ∈ [0, 6]2 and y ∈ [−2, 2]2, challenging optimization methods in maintaining diverse designs. Specifically, \({f}_{1}({\boldsymbol{x}})=\mathop{\sum }\nolimits_{i = 1}^{2}\sin (\pi {x}_{i}),{f}_{2}({\boldsymbol{x}})=\mathop{\sum }\nolimits_{i = 1}^{2}\cos (\pi {x}_{i})\) with Pareto designs at all combinations of (x1, x2) ∈ {1, 3, 5} × {1, 3, 5}.

Fig. 2 shows that ManGO reconstructs the entire design-score manifold despite removing the top 40% of low-scoring data. Its generated manifold recovers the erased global minima locations and maintains the overall topographic trends in the Branin task in Figure 2a. ManGO also recovers all 9 disconnected Pareto fronts and preserves the negative correlation between f1 and f2 objectives in the OmniTest task in Figure 2b (visualizing two regions for ease of observation). Across both tasks, the generated manifolds exhibit only minimal deviations from the original manifolds, even in out-of-distribution regions. This demonstrates ManGO’s robust OOG capability, validating its ability to extrapolate beyond the training distribution accurately. Furthermore, both generated manifolds exhibit consistent minor elevation, with a deviation of less than 5%. For example, Figure 2c reveals a gradual score elevation between unconditional and expected scores in Branin’s training region, where higher expected scores correspond to sparser training samples. This conservative estimation under uncertainty serves as an advantage in offline optimization, where reliable performance outweighs aggressive extrapolation [20].

Note that unconditional and conditional samples are generated via ManGO without guidance and with preferred-score guidance, respectively. a, b Manifold and trajectory comparisons for the Branin (SOO) and OmniTest (MOO) tasks. The generated manifold is constructed via ManGO’s design-to-score prediction within the feasible region of designs. Close alignment between the ManGO-generated and original manifold, confirming the model’s proficiency in learning complex design-score relationships. Generated trajectories visualize ManGO’s score-to-design mapping under minimal score and design constraints, highlighting its capacity to perform targeted denoising toward desired regions. c Branin task: Unconditional samples (green) match preferred scores from the training dataset, while conditional samples (blue) extrapolate beyond the training minimum (grey dashed line). d OmniTest task: Conditional samples better approximate preferred scores and Pareto-dominate the training data (grey) compared to unconditional samples. These results indicate that ManGO effectively reconstructs in-distribution samples during unconditional generation—reflecting well-learned manifold structure—while enabling OOG of superior samples through conditional guidance, demonstrating robust extrapolation based on the learned manifold.

Unlike design-space approaches limited to score-based guidance, ManGO’s manifold learning framework enables additional conditioning on design constraints, providing more flexible control over the generation process. Figures 2a, b present ManGO’s generated trajectories with minimal score and varying design constraints as conditional guidance. For instance, in Figure 2a with design constraints x1 ∈ [−5, 0], x2 ∈ [0, 15] and minimum score condition \({y}_{\min }=0.398\), ManGO successfully guides a randomly initialized point (violating the constraints) to converge to the constrained minimum. Notably, ManGO exhibits accelerated convergence as noisy samples approach preferred points. This indicates its ability to exploit favorable noise points for enhanced output quality, naturally aligning with our inference-time scaling framework. On the other hand, ManGO directly transports samples from random initializations to preferred points in the joint design-score space, simultaneously generating both designs and their scores. This eliminates two key requirements of conventional approaches: (i) iterative score evaluation on noisy designs via external forward models, and (ii) gradient computation along manifold geometry.

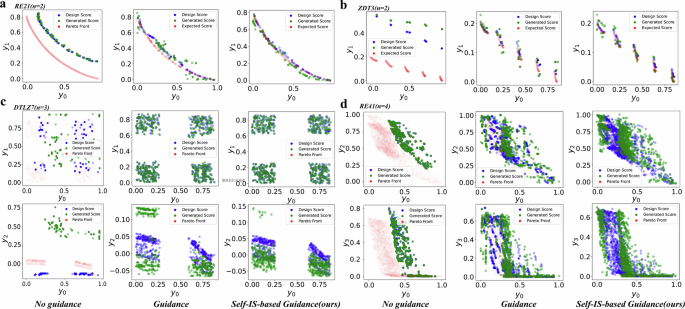

Figures 2 and Fig. 3 demonstrate the critical role of conditional guidance on ManGO’s OOG capability. Unconditional generation faithfully reproduces in-distribution samples (matching training score ranges in Fig. 2c, d), while conditional generation produces designs that extrapolate beyond the training distribution. This key distinction reveals that although ManGO learns the complete manifold structure, explicit guidance is essential to unlock its full OOG capabilities. The consistent results across RE21, ZDT3, DTLZ7, and RE41 benchmarks (Fig. 3) robustly confirm this fundamental behavior. Specifically, ManGO without guidance exhibits conservative behavior, remaining within the training distribution. Meanwhile, when guided by Pareto front (PF) reference points, ManGO approaches the complete PF, and our self-supervised scaling guidance achieves better precision than standard guidance.

Across all subfigures of (a) RE21, (b) ZDT3, (c) DTLZ7, and (d) RE41, columns from left to right respectively show the results of no guidance, standard guidance, and the proposed self-IS-based guidance. The progressive improvement in generation quality highlights ManGO’s capability in OOG under conditional guidance. Furthermore, the enhanced performance with self-IS-based guidance illustrates the ManGO’s feasibility for more delicate guidance mechanisms.

Evaluation on single-objective optimization

We employ five representative tasks from Design-Bench [6] and sample 10,000 offline design samples per task [28]: (i) Ant Morphology [38] (60-D parameter optimization for quadruped locomotion speed), (ii) D’Kitty Morphology [39] (56-D parameter optimization for movement efficiency enhancement of a quadruped robot), and (iii) Superconductor [37], (iv) TF-Bind-8 [40] and (v) TF-Bind-10 [40] (discrete DNA sequence optimization for transcription factor binding affinity with sequence lengths 8 and 10). We follow the maximization setting of Design-Bench and normalize scores based on the maximal score in the unobserved dataset [6], where higher scores indicate better performance.

As shown in Table 1, we compare ManGO with 22 baseline methods and report normalized scores of top k = 128 candidates (100th percentile). ManGO establishes a state-of-the-art performance across diverse domains (materials, robotics, bioengineering) on five datasets. ManGO with standard guidance attains a mean rank of 2.2/24 (securing the second position), while the self-supervised importance sampling (self-IS)-based variant further improves this to 1.4/24 (the first). ManGO ranks first on four tasks, including D’Kitty, Superconductor, TF-Bind-8, and TF-Bind-10, and secures second place on Ant, trailing only CMA-ES. This cross-domain advantage suggests that the effectiveness of learning the design-score manifold is general and not limited to specific problems.

The 13.6-rank leap over Mins (rank 15.0, the previous best of inverse-modeling baselines) demonstrates the superiority of the manifold-learned generation to design-space-learned methods. Meanwhile, the 2.8-rank lead over RaM (the best of forward-modeling baselines) suggests that score-conditioned diffusion can better exploit offline data than ranking-based approaches. It also outperforms the top surrogate-based methods (CMA-ES, rank 13.0) by 11.6 ranks, without the need for designing acquisition functions. On the other hand, the self-IS variant shows consistent improvements over standard ManGO: score boosts on Superconductor (+3.8%) and modest gains on Ant (+0.8%) and TF-Bind-10 (+0.9%). A deviation occurs in D’Kitty, where a slight average score reduction (−0.2%) accompanies improved peak performance (+0.3%). The marginal gains reflect that standard ManGO reaches near-optimal performance, leaving limited room for improvement.

Evaluation on multi-objective optimization

We utilize Off-MOO-Bench [14] and sample 60,000 samples per task [34]: (i) Synthetic Functions (an established collection of MOO evaluation tasks with 2 − 3 objectives exhibiting diverse PF characteristics, such as ZDT [41] and DTLZ [42]), and (ii) real-world engineering (RE) applications [43] (a suite of practical design tasks with 2−4 competing objectives, such as four-bar truss design and rocket injector design). We employ two standard evaluation metrics: (i) Hypervolume (HV)[44], which quantifies the dominated volume between candidate designs and nadir point (each dimension of which corresponds to the worst value of one objective), and (ii) Inverted Generational Distance (IGD) [45], which measures the average minimum distance between candidate designs and the ground-true PF, both metrics applied to non-dominated sorting [46] with k = 256 candidate designs (100th percentile). While generating high-quality single solutions from purely offline data remains challenging, we also report our method’s performance at k = 1 to demonstrate competitive performance. Note that while we replace the online query in MOBO/NSGA2 with a surrogate forward model for offline adaptation, performance degradation occurs versus online operation as the surrogate model cannot perfectly emulate environment feedback.

We follow the minimization setting of Off-MOO-Bench and normalize HV (IGD) values based on the best HV (IGD) of the training dataset, where higher HV (lower IGD) indicates better performance. ManGO outperforms all baseline methods across both synthetic and real-world MOO benchmarks according to Table 2. Regarding synthetic tasks, the self-IS-based ManGO achieves the best mean rankings of 2.0 (HV) and 1.3 (IGD) out of 10 competing methods, while the standard guidance version follows closely with ranks of 2.7 (HV) and 1.7 (IGD), securing the top two positions. The superiority extends to RE tasks, where self-IS-based ManGO dominates with average ranks of 1.3 (HV) and 2.0 (IGD), establishing itself as the overall leader.

As presented in the upper part of Table 2, ManGO shows consistent superiority across ZDT, OmniTest, and DTLZ series. Under the most challenging OOG scenarios where preferred designs are distant from ZDT’s training data, self-IS-based ManGO outperforms the best baselines by 60.1% in IGD (vs DDOM) and 3.9% in HV (vs NSGA-2). For 1-shot evaluation settings (i.e., k = 1), ManGO matches the performance of baseline methods requiring 256-shot evaluations. This shows that the efficient learning on the design-score manifold enables high-quality guidance generation even with minimal sampling.

As task complexity escalates with increasing objectives, the self-IS-based variant consistently achieves top performance in both HV and IGD metrics in the lower part of Table 2. This confirms its OOG capability in high-dimensional objective spaces. Compared to synthetic tasks, learning on manifolds poses greater challenges for RE tasks. Consequently, this diminishes ManGO’s performance advantage in 1-shot and standard guidance modes. However, self-IS guidance effectively offsets this by exploring more noise points, and its performance gains become more pronounced as task complexity increases.

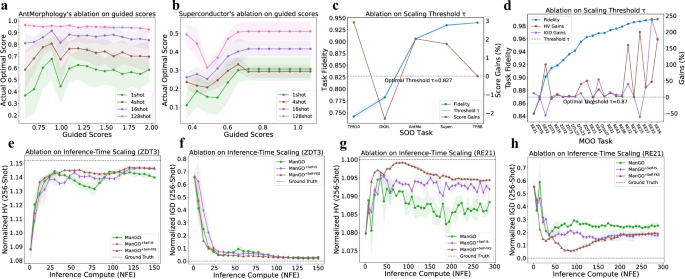

Ablation study on robustness to preferred scores

Although we use the maximum unobserved score as a guided score (i.e., yp = 1.0) in Table 1, practical scenarios may lack precise knowledge of optimal scores. We evaluate ManGO’s robustness to suboptimal guided scores by analyzing the best score of generated candidate designs across varying shot numbers. Fig. 4a, b reveal two key insights: (i) Increasing the shot number enhances robustness, with stable optimal designs emerging when guided scores exceed 0.7 (Ant) or 5.5 (Superconductor) at 128 shots. (ii) Decreasing the shot number to 1, peak performance occurs near but not exactly at yp. This demonstrates that the generation diversity of diffusion models provides practical robustness for ManGO when yp is unknown.

a, b Flexibility to guidance specification: Performance sensitivity to deviations between guided scores and true optima on Ant (a) and Superconductor (b) tasks, demonstrating ManGO’s stability under suboptimal guidance conditions. c, d Fidelity-adaptive scaling: Performance gains relative to baseline fidelity thresholds in offline SOO (c) and MOO (d) tasks, with dashed lines indicating empirically optimal thresholds (τopt = 0.827 for SOO; τopt = 0.87 for MOO). e–h Inference-time scaling efficiency: HV (e, g) and IGD (f, h) versus number of function evaluations (NFE) for ZDT3 (e, f) and RE21 (g, h) task for three approaches, comparing standard denoising, self-IS scaling, and FKS scaling methods. Consistent performance improvements are achieved through adaptive noise-space exploration.

Ablation study on optimal fidelity threshold

We quantitatively evaluate the design-to-score prediction accuracy through the fidelity metric (Eq. (10)), which measures the distance between the ground-truth scores and the generated scores of unconditional samples. Figures 4c, d show that self-IS-based scaling achieves performance gains on the majority of SOO (optimal fidelity threshold τopt = 0.827) and MOO tasks (τopt = 0.87). Furthermore, MOO tasks exhibit higher fidelity than SOO tasks due to SOO’s higher-dimensional design spaces. It is more likely to increase performance gains with increasing fidelity values because diffusion models with higher fidelity generate more accurate self-reward signals during inference, enabling more effective noise-space exploration.

Ablation study on inference-time scaling

We evaluate computation-performance tradeoffs by controlling NFE on ZDT3 in Figures 4e, f and RE21 in Fig. 4g, h, comparing three approaches: (1) standard guidance with more denoising steps, (2) self-IS-based scaling, and (3) Feynman-Kac-steering (FKS)-based scaling. For ZDT3, standard guidance achieves competitive performance at NFE = 31, demonstrating ManGO’s sample efficiency. However, performance degrades at intermediate NFE before recovering, revealing instability in simple step extension. In contrast, scaling-based methods show monotonic improvement with increasing NFE, where FKS scaling temporarily outperforms IS scaling during mid-range NFEs before converging at NFE = 150. The RE21 task exhibits different characteristics: all methods display bell-shaped performance curves, peaking at NFE = 78 (FKS), NFE = 36 (IS), and NFE = 36 (standard). Scaling-based methods attain higher peak performance over standard guidance while maintaining a sustained advantage after NFE = 36. These results indicate that scaling methods yield superior computational performance compared to simple step extension.

link